Prevent SAP Outages

Can you afford your SAP HANA system to be down?

An SAP HANA system downtime can have many inconvenient consequences for a company: Business interruptions, data loss and high costs for recovery measures. Therefore, the risk of such an outage should be kept as low as possible.

In this article, we share insights from our projects and explain how in-house developments affect the utilization of SAP systems. We also offer advice on what to watch for to avoid downtime and minimize the risk to your business.

SAP systems running on an SAP HANA database are no longer rare. But there are many details to consider before, during and after the migration to SAP HANA. SAP specifies the recommended operating system settings for SLES 12 and RHEL 7 on Intel-based hardware in SAP Notes 2205917 and 2292690, respectively. These must be followed to ensure proper operation.

Our analyses in customer projects

Following successful migrations to SAP HANA, we have supported many customers with objective performance analyses and subsequent recommendations for optimizing their systems. It therefore makes sense to give you an insight into these analyses before we turn to our recommendations.

For example, we analyzed the cloud platform of a large German hoster. The conditions: SAP systems are operated for international customers at several locations around the world, and individual customers were increasingly complaining about slow system response times. The goal of the project was first to verify whether there were any anomalies and to what extent the slow response times were caused by the hoster’s infrastructure. If they were, recommendations for improvement were then to be developed.

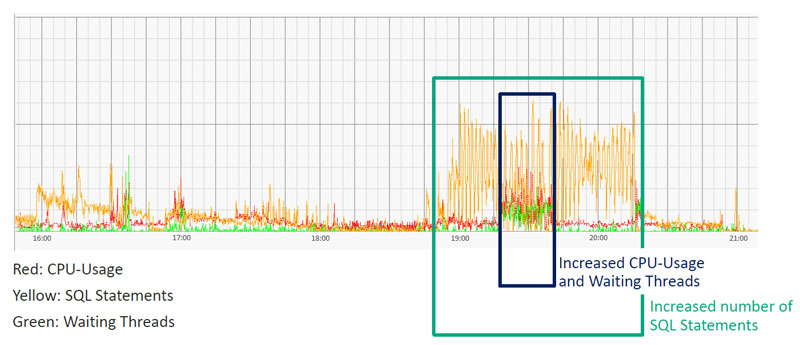

SAP HANA performance with typical CPU utilization

In the image above, you can see a typical CPU utilization of an SAP HANA database. Within the green box, the number of SQL statements (yellow) increases. Between 19:15 and 19:45 (blue box), the CPU utilization (red) then increases slightly and the number of waiting threads (green) increases. This resource load is entirely acceptable, since CPU utilization did not increase significantly over this short period. However, we also regularly measured significant increases in CPU utilization, as can be seen in the following graphic.

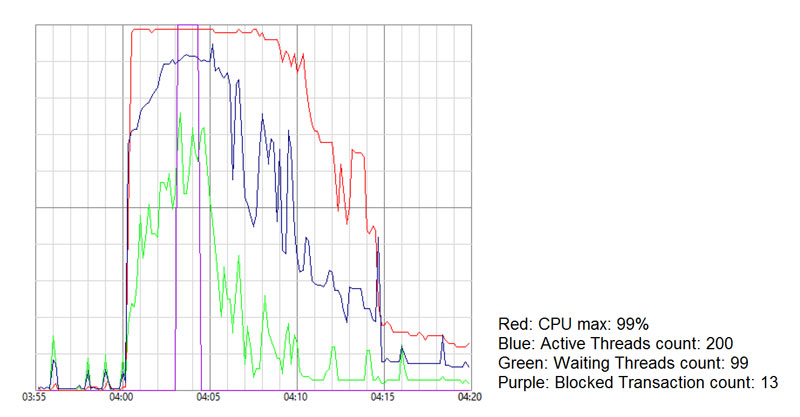

SAP HANA performance with high CPU utilization

The graph shows CPU utilization (red) peaking at 99 percent between 4:00 am and 4:08 am. The numbers of active threads (blue) and waiting threads (green) correlate with this peak. Resources are short during this brief period, so the number of blocked transactions rises to 13. We then analyzed the SQL statement that contributed to the 99 percent CPU utilization. Experience shows that resource shortages in SAP systems are often caused by in-house developments or their SQL statements.

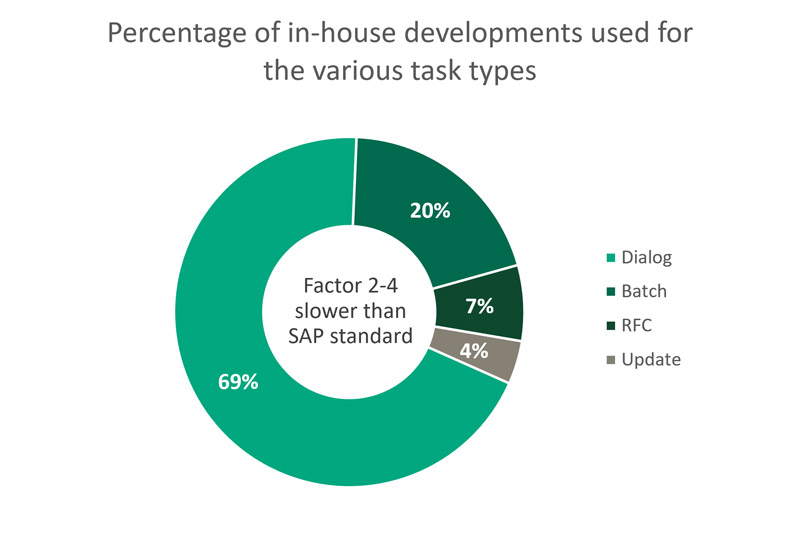

In-house developments vs. standard transactions

In his 2011 publication “Metrics for in-house developments in SAP ERP systems”, Professor Dr. Mielke showed that in-house developments are slower than standard transactions by a factor of 2 to 4. 69 percent of in-house developments run in dialog mode. The diagram illustrates the results of his publication.

See “Metrics for in-house developments in SAP ERP systems,” retrieved from https://www.user.tu-berlin.de/komm/CD/paper/030632.pdf.

Slow system response times cost valuable employee time: nearly two working days per employee per year. So how can a resource shortage on SAP HANA be avoided?

To avoid it, developers need to be trained, and custom developments and their SQL statements need to be optimized specifically for SAP HANA. After the migration to SAP HANA, it is often noticeable that the response times of in-house developments have increased, although everything was expected to become faster with SAP HANA. That expectation does not hold for most in-house developments that run on SAP HANA without optimization.

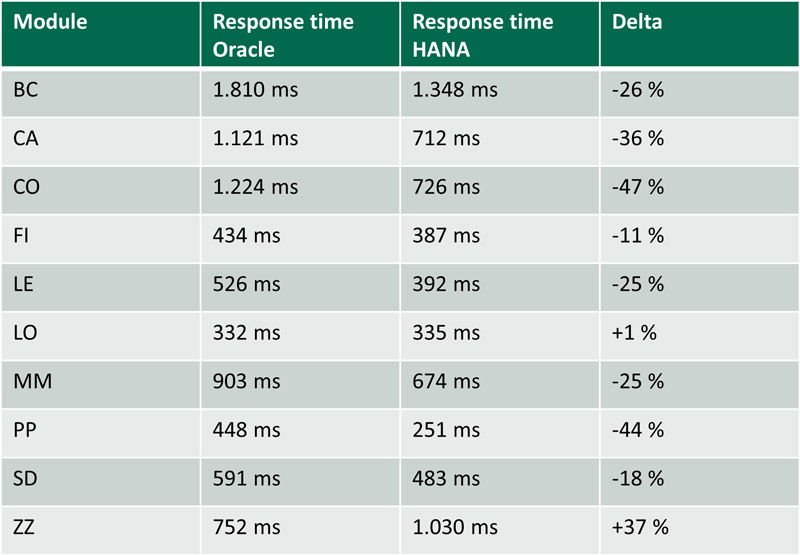

Response times Oracle vs. HANA per module

The image above shows the response times on Oracle and after the migration to HANA, broken down by module: the response time of in-house developments (module ZZ) has become slower on average.

How to analyze SQL statements

One option is to activate the “Expensive Statements Trace” in SAP HANA Studio. After logging on to the respective system, you will find it in the Administration Console under the “Trace Configuration” tab. To activate it, set the status to “Active”.

Based on the data collected from then on, the SQL statements can be analyzed, either in SAP HANA Studio under the “Performance” tab and then “Expensive Statements Trace”, or directly via SQL statements. SAP provides a useful collection of SQL statements for download in SAP Note 1969700. Among them are text files with SQL statements, for example HANA_SQL_ExpensiveStatements.txt.
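Once trace data has been exported, a few lines of script are often enough to see which statements dominate. The following is a minimal sketch, not part of the SAP tooling: it assumes the rows were exported as dictionaries whose keys follow column names of the M_EXPENSIVE_STATEMENTS monitoring view (STATEMENT_HASH, APP_USER, DURATION_MICROSEC, CPU_TIME). The sample rows and the function name are hypothetical and for illustration only.

```python
from collections import defaultdict

# Hypothetical sample rows. The keys are assumed to match column names of the
# M_EXPENSIVE_STATEMENTS monitoring view; verify them on your HANA release.
SAMPLE_ROWS = [
    {"STATEMENT_HASH": "a1", "APP_USER": "ZREPORT", "DURATION_MICROSEC": 4_000_000, "CPU_TIME": 3_500_000},
    {"STATEMENT_HASH": "a1", "APP_USER": "ZREPORT", "DURATION_MICROSEC": 6_000_000, "CPU_TIME": 5_000_000},
    {"STATEMENT_HASH": "b2", "APP_USER": "DIALOG1", "DURATION_MICROSEC": 1_500_000, "CPU_TIME": 900_000},
    {"STATEMENT_HASH": "c3", "APP_USER": "DIALOG2", "DURATION_MICROSEC": 200_000, "CPU_TIME": 100_000},
    {"STATEMENT_HASH": "c3", "APP_USER": "DIALOG2", "DURATION_MICROSEC": 200_000, "CPU_TIME": 100_000},
    {"STATEMENT_HASH": "c3", "APP_USER": "DIALOG2", "DURATION_MICROSEC": 200_000, "CPU_TIME": 100_000},
]


def rank_statements(rows, top_n=3):
    """Group trace rows by statement hash and rank them by total duration."""
    totals = defaultdict(lambda: {"executions": 0, "duration_us": 0, "cpu_us": 0})
    for row in rows:
        entry = totals[row["STATEMENT_HASH"]]
        entry["executions"] += 1
        entry["duration_us"] += row["DURATION_MICROSEC"]
        entry["cpu_us"] += row["CPU_TIME"]
    # Most expensive statements (by summed duration) first.
    ranked = sorted(totals.items(), key=lambda item: item[1]["duration_us"], reverse=True)
    return ranked[:top_n]


if __name__ == "__main__":
    for statement_hash, stats in rank_statements(SAMPLE_ROWS):
        print(statement_hash, stats)
```

Ranking by summed duration rather than by single executions highlights statements that are individually moderate but run very often, which is a typical pattern in custom reports.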

Another option for analyzing your custom developments specifically is the Custom Development Management Cockpit (CDMC) in SAP Solution Manager. You can access the CDMC via transaction CNV_CDMC.

In addition, SAP HANA Workload Management, available as of SPS 08, offers the option to manage resource utilization. SAP HANA Workload Management is one possible measure for providing Quality of Service. By implementing Quality of Service, resources can be managed so that all systems consistently receive performance at a specified, guaranteed quality. For example, if multiple systems share one SAP HANA database, one system may use SAP HANA resources to such an extent that another system shows very slow response times because so few resources are left. In the above example of high CPU utilization, SAP HANA Workload Management can be used to regulate the CPU utilization of the one system with the poorly performing custom development. To learn how to configure Workload Management in SAP HANA, see SAP Note 2222250.
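The effect of such a limit can be illustrated with a deliberately simplified toy model. It is not how SAP HANA schedules work internally, and the system names, demand figures and the `allocate_cpu` function are hypothetical. The sketch assumes that systems share a fixed CPU capacity proportionally when total demand exceeds it, and that a cap simply limits how much one system may request.

```python
def allocate_cpu(demands, capacity=100.0, caps=None):
    """Distribute a shared CPU capacity (in percent) among systems.

    demands: requested CPU share per system.
    caps: optional upper limit per system; systems without an entry are uncapped.
    If the (capped) demand exceeds the capacity, all systems are scaled down
    proportionally. This is a toy model, not SAP HANA's actual behavior.
    """
    caps = caps or {}
    limited = {name: min(demand, caps.get(name, demand)) for name, demand in demands.items()}
    total = sum(limited.values())
    if total <= capacity:
        return limited
    scale = capacity / total
    return {name: demand * scale for name, demand in limited.items()}


# Hypothetical demands: one system with an unoptimized custom development
# requests most of the host, two others have moderate needs.
demands = {"custom_dev_system": 90, "erp": 30, "bw": 20}

uncapped = allocate_cpu(demands)
capped = allocate_cpu(demands, caps={"custom_dev_system": 50})

if __name__ == "__main__":
    print("without limit:", uncapped)
    print("with limit:   ", capped)
```

Without a limit, every system is squeezed because of one heavy consumer. With the cap in place, the other two systems receive their full demand and only the system with the custom development is throttled.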

Why is Quality of Service or Workload Management important?

It is important that every customer – whether internal or external – receives the service they have been promised. This is what OLAs or SLAs are for. Due to the natural limitation of available resources, performance must be regulated for all customers in order to be able to provide the promised service. If there is no Quality of Service, the case described at the beginning occurs – customers complain about slow response times of the system.

In the case described above, the reason was simple: each customer could use as much capacity as they wanted on the hoster’s shared infrastructure. One customer used so much of it that too few resources remained for the other customers, and the response times of their systems suffered. In the worst case, this could even lead to a system outage. A study carried out by Techconsult on behalf of HP examined the resulting system downtime costs:

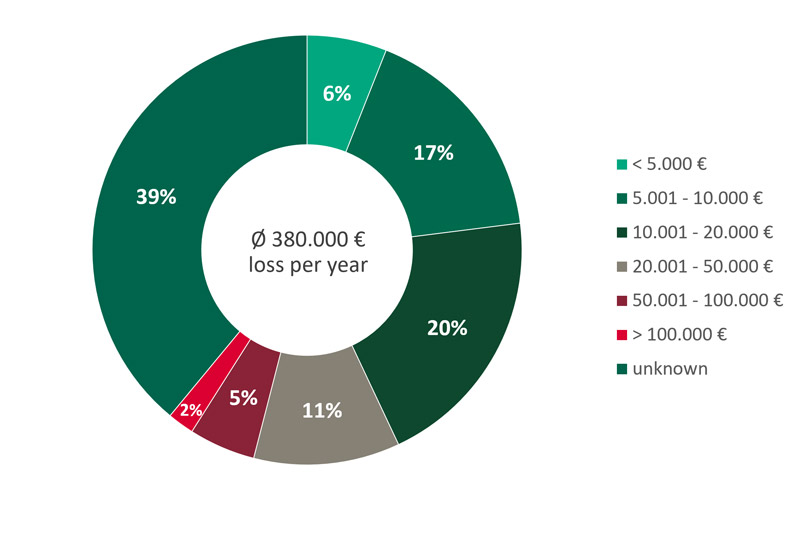

Adapted from the Techconsult study “German SMEs need better IT support services” commissioned by HP.

The diagram summarizes the results of the study: On average, system failure costs in a medium-sized company amount to 380,000 € per year. This is based on an average of 4 outages of 3.8 hours each and 25,000 € system downtime costs per hour. It should be noted that, according to the study, the amount of damage varies depending on the size of the company. In addition, 39 percent of the companies do not know how much damage a one-hour system failure would cause them.
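The annual figure follows directly from the three averages quoted from the study. As a quick check, and as a template for plugging in your own numbers, the calculation can be sketched as follows; the function name is hypothetical.

```python
def annual_downtime_cost(outages_per_year, hours_per_outage, cost_per_hour):
    """Annual downtime cost = outages x hours per outage x cost per hour."""
    return outages_per_year * hours_per_outage * cost_per_hour


# Averages quoted from the study: 4 outages, 3.8 hours each, 25,000 EUR per hour.
study_cost = annual_downtime_cost(4, 3.8, 25_000)

if __name__ == "__main__":
    print(f"{study_cost:,.0f} EUR per year")  # prints "380,000 EUR per year"
```

Replacing the three inputs with your own outage count, outage duration and hourly cost gives a first rough estimate for your company.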

Conclusion: Considering the amount of damage, it is definitely worth thinking about the introduction of a Quality of Service based on OLAs or SLAs.

Discover how REALTECH can support you. With our Managed Services, we ensure reliable operation of your SAP systems.